Date Published April 3, 2018 - Last Updated December 13, 2018

The goal of service management is to monitor and optimize the use of people, process, and tools to perform services that deliver the designed value. This goal is based on a working definition I created using a combination of the 2007 IT Infrastructure Library (ITIL) definitions of service and service management.

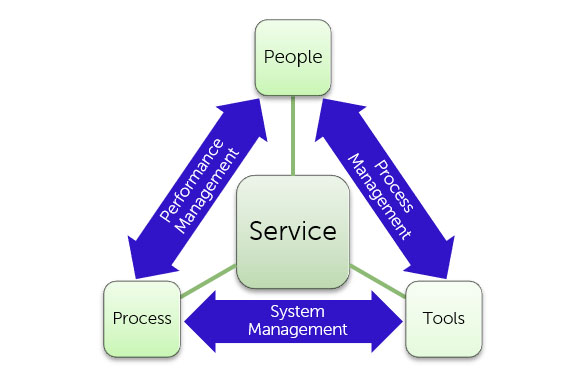

Many service managers are very familiar with the “service triangle,” with the three elements of people, process, and tools arranged around a central theme such as service delivery. Good practices dictate the use of an integrated set of service management activities that optimize the use of people, process, and tools.

In another HDI SupportWorld article, “Combining People and Process Management for Optimal Service Management,” I described linkages between these three elements:

When delivering services, people use processes and tools to achieve the desired outcomes. The processes themselves are supported by tools and used by people. Those tools should be designed to support the processes, and they should be easy to use. The primary linkages between these elements are system design, system training, and performance management.

As I’ve applied the concept of linking the service elements, I’ve evolved the linkages to performance management, process management, and system management. This article explores one aspect of performance management: measuring service quality.

A key mechanism for measuring service quality as part of performance management is to do quality control (QC) audits or checks of enough service transactions to get a reliable representation of their performance. There are several ways to perform these QC checks, including call monitoring (live or recorded), chat log or e-mail message reviews, and/or service ticket reviews.
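As a rough illustration of choosing which transactions to audit, the sketch below draws a simple random sample of tickets. The function name `sample_for_audit`, the ticket IDs, and the 25 percent sampling rate are all hypothetical; the right rate depends on your own ticket volumes and how reliable a picture you need.

```python
import math
import random


def sample_for_audit(ticket_ids, sampling_rate, seed=None):
    """Pick a simple random sample of tickets to audit.

    sampling_rate is a fraction in (0, 1]. The value used below is a
    hypothetical example, not a recommended rate. At least one ticket
    is picked whenever any tickets exist.
    """
    if not 0 < sampling_rate <= 1:
        raise ValueError("sampling_rate must be in (0, 1]")
    tickets = list(ticket_ids)
    if not tickets:
        return []
    sample_size = max(1, math.ceil(len(tickets) * sampling_rate))
    rng = random.Random(seed)  # seeded so a selection can be reproduced
    return sorted(rng.sample(tickets, sample_size))


# Hypothetical set of 40 closed tickets for one analyst.
closed_tickets = [f"INC{n:04d}" for n in range(1, 41)]
to_audit = sample_for_audit(closed_tickets, sampling_rate=0.25, seed=7)
print(len(to_audit), "tickets selected for QC audit")
```

Sampling at random, rather than letting the auditor pick, helps keep the audited transactions representative of the analyst's overall work.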

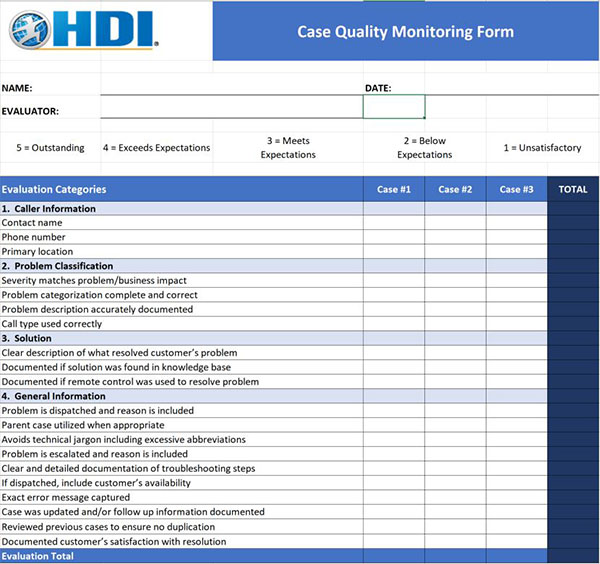

Every method of QC audit should be supported by a documented framework that is used to evaluate each service transaction. Service managers frequently employ a worksheet or checklist to record the QC audits. (Members of HDI who participate in the HDI Connect forum will find a Case Quality Monitoring Worksheet in the Resource Library.)

A sound framework for quality control audits has three key characteristics: the audit elements reflect performance standards that have been clearly communicated as requirements, those standards are included in agent or analyst training, and they are supported by the service- and knowledge-management tools that are in use.

Collecting and examining QC results against these requirements provides actionable analysis for performance management, coaching, and continuous improvement. It is a good people-management practice to share each individual’s results against group averages. While some may advocate for establishing a competitive dynamic within a team, unnecessary tension and conflict can arise from sharing results that compare analysts or agents to each other.
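One way to produce that individual-versus-group view is sketched below. The record layout, the analyst names, and the requirement names are hypothetical stand-ins for whatever your QC worksheet captures. The report shows only one analyst's pass rates next to the group average, never another analyst's numbers.

```python
from collections import defaultdict

# Hypothetical QC audit records: (analyst, requirement, passed)
audits = [
    ("ana", "correct_ticket_type", True),
    ("ana", "correct_ticket_type", True),
    ("ana", "daily_update", True),
    ("ana", "daily_update", False),
    ("ben", "correct_ticket_type", False),
    ("ben", "correct_ticket_type", True),
    ("ben", "daily_update", True),
    ("ben", "daily_update", True),
]


def pass_rates(records):
    """Return {requirement: fraction of audits that passed}."""
    totals = defaultdict(int)
    passes = defaultdict(int)
    for _analyst, requirement, passed in records:
        totals[requirement] += 1
        passes[requirement] += int(passed)
    return {req: passes[req] / totals[req] for req in totals}


def individual_vs_group(records, analyst):
    """Return {requirement: (analyst pass rate, group pass rate)}."""
    group = pass_rates(records)
    own = pass_rates([r for r in records if r[0] == analyst])
    return {req: (own[req], group[req]) for req in own}


report = individual_vs_group(audits, "ana")
for requirement, (own_rate, group_rate) in report.items():
    print(f"{requirement}: you {own_rate:.0%}, group {group_rate:.0%}")
```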

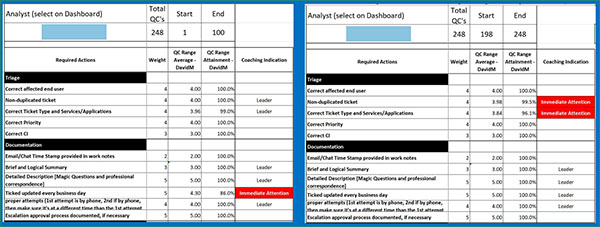

By analyzing an agent or analyst’s service quality audit data over time, a service manager can correct habits or behaviors that deviate from the desired result. One successful managed service provider that I have worked with uses specific sampling rates for its QC process and delivers performance management feedback to its analysts based on the results.

The example above shows two different ranges of QC audits of a single analyst’s tickets over a period of time. You can see how the results from the first 100 QC audits revealed an opportunity to improve on the requirement to update tickets every business day. In the last 50 QC audits, the ticket updating requirement improved, but other improvement opportunities arose.
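The same kind of before-and-after comparison can be sketched in a few lines. The audit data and requirement names below are invented for illustration and are far smaller than the 100 and 50 audits in the example; `split_at` is a hypothetical parameter marking where the earlier range ends.

```python
def pass_rate_by_requirement(audits):
    """audits: list of dicts mapping requirement name -> True if passed."""
    return {
        req: sum(audit[req] for audit in audits) / len(audits)
        for req in audits[0]
    }


def compare_ranges(audits, split_at):
    """Return the change in pass rate per requirement, recent minus earlier."""
    if not 0 < split_at < len(audits):
        raise ValueError("split_at must leave audits in both ranges")
    earlier = pass_rate_by_requirement(audits[:split_at])
    recent = pass_rate_by_requirement(audits[split_at:])
    return {req: round(recent[req] - earlier[req], 2) for req in earlier}


# Hypothetical audits for one analyst, oldest first.
analyst_audits = [
    {"daily_update": False, "correct_category": True},
    {"daily_update": False, "correct_category": True},
    {"daily_update": True, "correct_category": True},
    {"daily_update": False, "correct_category": True},
    {"daily_update": True, "correct_category": False},
    {"daily_update": True, "correct_category": True},
]

change = compare_ranges(analyst_audits, split_at=4)
print(change)
```

A positive change means the requirement improved in the recent range; a negative change points to a new coaching opportunity.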

In addition to the opportunities to deliver effective coaching and performance counseling to individual agents or analysts, examining aggregated QC audit data can reveal training-, tools-, or process-related issues that need to be corrected for an entire team. For example, if 50 percent or more of your analysts are not choosing the correct ticket type, then you as a service manager probably have a training opportunity to reinforce the correct way to choose the type of ticket to use.
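A minimal sketch of that team-level check follows. The 50 percent threshold comes from the example above; the data layout, analyst names, and requirement names are hypothetical.

```python
def team_wide_issues(missed_by_analyst, requirements, threshold=0.5):
    """Flag requirements missed by at least `threshold` of the analysts.

    missed_by_analyst: {analyst: set of requirements that analyst misses}
    Returns a list of (requirement, share of analysts missing it).
    """
    analyst_count = len(missed_by_analyst)
    if analyst_count == 0:
        return []
    flagged = []
    for req in requirements:
        missing = sum(req in missed for missed in missed_by_analyst.values())
        share = missing / analyst_count
        if share >= threshold:
            flagged.append((req, share))
    return flagged


# Hypothetical summary of aggregated QC audit results for a team of four.
missed = {
    "ana": {"correct_ticket_type"},
    "ben": {"correct_ticket_type", "daily_update"},
    "cam": set(),
    "dee": set(),
}

flagged = team_wide_issues(missed, ["correct_ticket_type", "daily_update"])
print(flagged)
```

Requirements that appear in the flagged list are candidates for team training or a tool or process fix rather than individual coaching.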


How Service Quality Relates to Business Value

The key value of a service provider is most effectively described as “what the service provider was hired to do.” In the case of IT service and support, it is most commonly reflected in the productivity of end users performing work supported by technology.

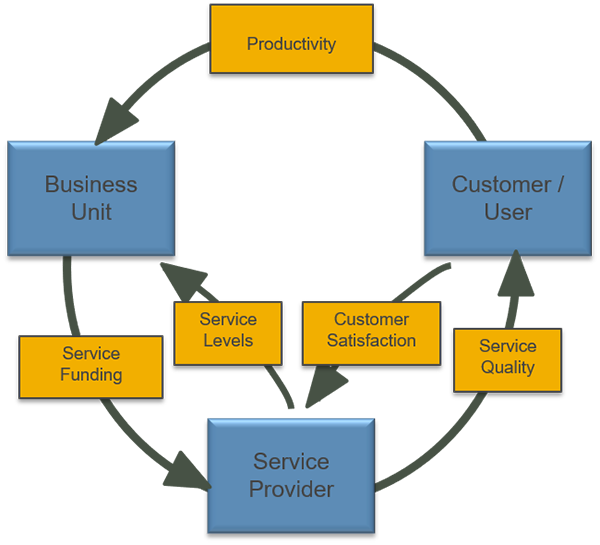

The value network diagram above can be a useful organizing principle to make sure that we, as service providers, are managing and measuring the right things to demonstrate value.

In this diagram, value is exchanged between a business unit, its end users, and a service provider. Those value interactions include funding (dollar value) and productivity (business value). The service provider demonstrates value in its service levels and its service quality, which is measured by the value reflected in customer satisfaction.

The value network diagram establishes service quality as a key component of the business value delivered by a service provider. By utilizing an effective quality control performance management process, service providers optimize their use of people to perform services that deliver the designed value.

How to Start Measuring Service Quality

For those with a good service management framework in place, the steps for a service manager to take to start measuring service quality can be simple:

- Integrate service quality measurements into your service management framework. Determine who will measure service quality, how they will measure it, and how often. You will then have the makings of a service quality measurement process.

- Take the service quality measurement process and integrate it into your performance management process. Determine who will analyze service quality and who will deliver coaching and performance feedback.

- Implement and continuously improve the process.

The steps above rely on a few key mechanisms: having a service management framework that is used to specify ongoing service management activities; having a performance management process to deliver coaching and performance feedback to analysts; and not just measuring service quality, but having someone responsible for analyzing the results and taking action for continuous improvement. Before taking the steps above, be sure to have those key mechanisms ready to use.

Bill Payne is a results-driven IT leader and an expert in the design and delivery of cost-effective IT solutions that deliver quantifiable business benefits. His more than 30 years of experience at companies such as Pepsi-Cola, Whole Foods Market, and Dell includes data communications consulting, messaging systems analysis, management of multiple infrastructure support and engineering teams, medical information systems deployment, retail and infrastructure systems management, organizational change management, and IT service management consulting. Leveraging his experience in leading, managing, and executing both technical and organizational transformation projects in numerous industries, Bill currently leads his own service management consulting company. Find him on LinkedIn, and follow him on Twitter @ITSMConsultant.